In conversations about hardware, the focus usually falls on the processor. That is understandable, but in practice performance depends less on raw computing power than on whether the system can keep “feeding” that processor with data fast enough. When RAM cannot keep up, an expensive multi-core chip simply sits idle, waiting for the next batch of data.

This becomes especially noticeable when working with VPS environments, high-load databases, or virtualization systems. In such scenarios, RAM speed becomes the factor that either lets a system “fly” or turns it into a constant source of delays.

From SDRAM to the First Steps of DDR

Before DDR, personal computers relied on SDRAM. At the time, synchronizing memory with the system bus was a breakthrough, but the bandwidth it offered quickly stopped being enough. As processors gained muscle, the old memory could no longer keep pace with them.

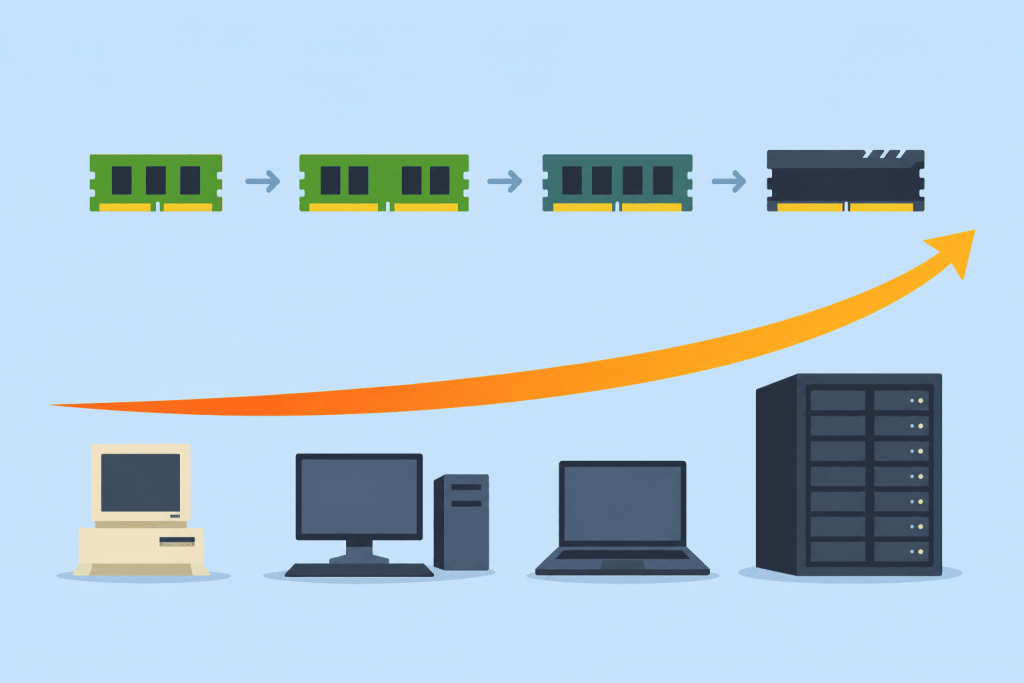

The solution came in the form of DDR (Double Data Rate) technology. The idea was simple but effective: transfer data twice per clock cycle, on both the rising and the falling edge of the clock signal. This doubled the transfer rate without requiring higher clock frequencies from the memory chips themselves. Then came the evolution of versions, from the second generation to the fifth. Each new iteration did not just add bigger benchmark numbers, but also gradually reduced power consumption and increased module density, which is critical for stability under constant load.
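
The doubling is easy to check with a little arithmetic. The sketch below assumes a 64-bit module data bus and a 200 MHz bus clock. The function name and these example figures are illustrative only, and the result is a theoretical peak rather than what an application would actually measure.

```python
def peak_bandwidth_mb_s(bus_clock_mhz, transfers_per_clock=2, bus_width_bits=64):
    """Theoretical peak bandwidth in MB/s (1 MB = 10**6 bytes)."""
    bytes_per_transfer = bus_width_bits / 8
    return bus_clock_mhz * transfers_per_clock * bytes_per_transfer


# Same 200 MHz clock, single vs. double data rate (assumed example values).
sdr = peak_bandwidth_mb_s(200, transfers_per_clock=1)
ddr = peak_bandwidth_mb_s(200, transfers_per_clock=2)

print(f"Single data rate at 200 MHz: {sdr:.0f} MB/s")
print(f"Double data rate at 200 MHz: {ddr:.0f} MB/s")
```

With these inputs the single-rate figure is 1600 MB/s and the double-rate figure is 3200 MB/s, which is where the "PC-3200" label on DDR-400 modules comes from.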

Why a New Generation Is Not Just About Numbers

For a home user, moving from one memory standard to another often looks like a nice but not always essential bonus. In a server rack, though, the situation is different. When a server is simultaneously handling thousands of sessions or processing massive SQL queries, memory bandwidth becomes the main limiting factor.

In the past, we often ran into the “bottleneck” effect: the processor is ready to work, but the data exchange channel is overloaded. The transition to DDR4 partially removed this barrier, while today’s DDR5 pushes it back much further, especially for multi-core architectures where every thread competes for access to memory.
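
A rough way to picture this bottleneck is to divide the theoretical memory bandwidth by the number of cores sharing it. The sketch below is a back-of-the-envelope estimate, not a benchmark. The 64-core, 8-channel server is a hypothetical configuration, DDR4-3200 and DDR5-4800 are picked as representative speed grades, and each channel is assumed to move 8 bytes per transfer.

```python
def bandwidth_per_core_gb_s(transfer_rate_mt_s, channels, cores, bytes_per_transfer=8):
    """Theoretical peak bandwidth available to each core, in GB/s (10**9 bytes)."""
    total_mb_s = transfer_rate_mt_s * bytes_per_transfer * channels
    return total_mb_s / (1000 * cores)


# Hypothetical server: 8 memory channels shared by 64 cores.
CHANNELS, CORES = 8, 64

for name, rate in [("DDR4-3200", 3200), ("DDR5-4800", 4800)]:
    share = bandwidth_per_core_gb_s(rate, CHANNELS, CORES)
    print(f"{name}: about {share:.1f} GB/s per core")
```

Under these assumptions each core gets roughly 3.2 GB/s with DDR4-3200 and 4.8 GB/s with DDR5-4800. Real workloads see less, since theoretical peaks ignore refresh cycles, access patterns, and contention.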

The Relevance of DDR5 in Modern Hardware

Today DDR5 is no longer exotic; it is the standard for new server platforms. The key advantage is not the raw megahertz figure but the redesigned architecture of the module itself, which splits each module into two independent subchannels. This distributes information flows much more efficiently and helps modern CPUs with a large number of cores avoid “starvation.”
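
One concrete piece of that redesign can be shown with simple arithmetic. As we understand the JEDEC specifications, a DDR4 module exposes one 64-bit data channel with a burst length of 8, while a DDR5 module exposes two independent 32-bit subchannels with a burst length of 16. The sketch below compares the bytes delivered per burst with a 64-byte cache line. The class and field names are illustrative, and the cache-line size is an assumption that holds for typical server CPUs.

```python
from dataclasses import dataclass

CACHE_LINE_BYTES = 64  # assumption: typical cache-line size on server CPUs


@dataclass(frozen=True)
class ModuleLayout:
    name: str
    subchannels: int   # independently addressable channels per module
    width_bits: int    # data width of each subchannel, ECC bits excluded
    burst_length: int  # transfers per burst

    def bytes_per_burst(self) -> int:
        """Bytes one subchannel delivers in a single burst."""
        return self.width_bits // 8 * self.burst_length


DDR4 = ModuleLayout("DDR4", subchannels=1, width_bits=64, burst_length=8)
DDR5 = ModuleLayout("DDR5", subchannels=2, width_bits=32, burst_length=16)

for layout in (DDR4, DDR5):
    fills_line = layout.bytes_per_burst() == CACHE_LINE_BYTES
    print(
        f"{layout.name}: {layout.subchannels} subchannel(s), "
        f"{layout.bytes_per_burst()} bytes per burst, "
        f"fills a cache line: {fills_line}"
    )
```

In both cases a single burst delivers exactly one cache line. The difference is that a DDR5 module has two subchannels that can each serve a different request at the same time, which is what helps when many cores issue small, unrelated reads.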

Data centers are moving to this standard on a massive scale. For services working with enormous real-time data streams, this is a matter of survival. The bandwidth ceiling that restrained scaling for years has been raised significantly.

DDR6 Horizons and Future Demands

Even though DDR5 has not yet reached its full potential, the industry is already preparing the ground for DDR6. The amount of data generated by modern neural networks and analytics systems is growing faster than hardware itself can evolve.

Developers are planning to nearly double data transfer speeds by moving to new subchannel designs. The artificial intelligence sector is the main driver of this process, since memory has always been, and remains, a critical resource there. The target for mass adoption is the end of the decade, roughly 2028-2029, although technology testing is already underway.

A New Perception of Memory

The days when RAM was an afterthought, something you fixed by “throwing in another stick,” are gone. Now it is a complex component that determines the viability of the entire infrastructure, from the stability of virtual machines to rendering speed and transaction processing. While everyone looks at the number of cores inside a processor, it is the evolution of RAM that quietly and almost invisibly provides the level of scalability we have become used to.
